The history of Artificial Intelligence is usually traced to 1950, when British mathematician Alan Turing asked a revolutionary question: “Can machines think?”

This simple question marked the beginning of Artificial Intelligence — an incredible journey that transformed computers from simple calculators into intelligent systems capable of learning, reasoning, and even creating.

Today, when we talk about AI (Artificial Intelligence), it feels like magic — machines talking, thinking, even creating art!

But this didn’t happen overnight. The history of AI is a fascinating story — from understanding the human brain to teaching machines how to think.

The story begins in 1950, when Turing published the paper “Computing Machinery and Intelligence,” which opens with that question.

The question was not just philosophical: it laid the foundation for much of what would follow in computer science and AI.

Turing suggested that instead of asking whether a machine can think, we should ask whether it can act in a way that seems intelligent.

This led him to design a thought experiment, which he called the imitation game, that later became famous as the Turing Test.

🧩 What Is the Turing Test?

The Turing Test was a simple but brilliant idea.

Turing imagined a scenario where a human interrogator communicates with two unseen participants —

one is another human, and the other is a machine.

If the interrogator cannot reliably tell which one is the machine, then that machine can be said to “think.”

In other words, the Turing Test was the first real benchmark for Artificial Intelligence —

a way to measure whether a computer could imitate human reasoning and conversation.

💡 Why Turing’s Question Still Matters Today

Even more than 70 years later, Turing’s question — “Can machines think?” — continues to guide AI research.

Every chatbot, virtual assistant, and large language model (like ChatGPT or Gemini) is, in some way, an answer to that question.

Turing didn’t live to see his dream realized, but his vision shaped the entire field of AI.

He showed the world that intelligence isn’t just a human trait — it can also be designed, coded, and learned.

🧩 1956: The Birth of Artificial Intelligence

In 1956, the Dartmouth Conference, a summer workshop held at Dartmouth College, gave the field its name: the term “Artificial Intelligence” had been coined in the 1955 proposal for the event.

A few visionary scientists dreamed of building machines that could learn, reason, and make decisions like humans.

🧠 The Vision Behind the Conference

The idea for the conference came from John McCarthy, a young and visionary computer scientist at the time.

He collaborated with three other leading thinkers:

- Marvin Minsky (Harvard University)

- Claude Shannon (the father of Information Theory)

- Nathaniel Rochester (IBM scientist)

Together, they wrote a funding proposal to the Rockefeller Foundation in 1955, and it contained an idea that would reshape human history.

Here’s the exact line that changed everything:

“The study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

In simple terms, they believed that —

if we can understand how humans think, we can teach machines to do the same.

💬 Who Attended the Conference?

Attendance shifted over the summer, with about ten researchers taking part, including:

- John McCarthy (Dartmouth College)

- Marvin Minsky (Harvard)

- Claude Shannon (Bell Labs)

- Herbert Simon and Allen Newell (Carnegie Tech)

- Nathaniel Rochester (IBM)

The participants spent six intense weeks brainstorming, debating, and building ideas around how machines could reason, learn, and even use language.

⚙️ Key Topics Discussed

They explored several themes that are still relevant today:

- Neural networks – how to build systems inspired by the human brain

- Machine learning – making computers improve automatically through experience

- Natural language understanding – teaching computers to comprehend human language

- Automated reasoning – enabling computers to solve logical problems step-by-step

It’s amazing that these ideas — formed almost 70 years ago — are the foundation of what we call AI today.

🧩 Why It Was Revolutionary

Before this conference, computers were seen as mathematical tools — calculators for crunching numbers.

But the Dartmouth Conference redefined their purpose.

For the first time, scientists saw computers as thinking machines —

capable of reasoning, understanding, and decision-making.

The group didn’t achieve all their goals right away, but they set the direction for decades of AI research that followed.

🌍 What Happened After Dartmouth

The Dartmouth Conference didn’t end AI’s story — it started it.

The attendees went on to become legends in the field:

- John McCarthy created the programming language LISP, which became the standard for AI development for years.

- Marvin Minsky co-founded, with McCarthy, what became the MIT AI Lab, one of the most influential AI research centers.

- Herbert Simon and Allen Newell, working with Cliff Shaw, developed the Logic Theorist, often described as the first AI program because it could mimic human problem-solving.

These pioneers showed that aspects of intelligent behavior could be programmed, not just imagined.

🧭 Legacy of the Dartmouth Conference

The Dartmouth Conference taught one powerful lesson:

“AI is not just a technology — it’s an idea about understanding intelligence itself.”

It marked the moment when Artificial Intelligence evolved from science fiction into scientific research.

Every innovation you see today — from ChatGPT to self-driving cars — traces its roots back to that small classroom in 1956.

💡 1980–2000: The Age of Expert Systems

In the 1980s, as computer power improved and data storage became cheaper, AI research saw a new wave of hope.

Expert Systems were created to make decisions like doctors or engineers.

However, due to limited data and slow computing, AI couldn’t achieve its full potential yet.

⚙️ What Are Expert Systems?

An Expert System is a type of computer program designed to mimic the decision-making abilities of a human expert.

In simple terms, it was like storing a specialist’s brain inside a computer.

It worked using two main parts:

- Knowledge Base: A database containing facts and rules — for example, “If the engine doesn’t start and the battery is fine, check the spark plugs.”

- Inference Engine: The logic system that applies those rules to reach conclusions — like a human reasoning process.

This design made Expert Systems one of the first commercially successful applications of Artificial Intelligence.
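To make the two parts concrete, here is a minimal sketch in Python. It assumes a toy knowledge base of car-repair rules built around the spark plug example above; the rule contents and the `infer` function name are illustrative and not taken from any real Expert System. The inference engine uses simple forward chaining, which is one common approach among several.

```python
# Toy knowledge base: each rule is (set of required facts, conclusion).
# The rule contents are illustrative, not taken from any real expert system.
RULES = [
    ({"engine does not start", "battery is fine"}, "check the spark plugs"),
    ({"engine does not start", "battery is dead"}, "recharge the battery"),
    ({"check the spark plugs", "spark plugs are worn"}, "replace the spark plugs"),
]


def infer(facts, rules):
    """Forward chaining: keep firing rules until no new conclusion appears."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    # Return only what was derived, not the starting facts.
    return known - set(facts)


derived = infer(
    {"engine does not start", "battery is fine", "spark plugs are worn"}, RULES
)
print(sorted(derived))
```

Note that the third rule only fires because the first rule's conclusion is fed back in as a new fact. It also shows the weakness discussed later: the system knows nothing beyond the rules someone typed in.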

🧠 How They Changed the World

In the 1980s, companies realized AI could save time, reduce errors, and automate expert-level decisions.

Some major examples include:

- 🖥️ XCON (by Digital Equipment Corporation, 1980):

One of the earliest and most successful Expert Systems.

It helped configure complex computer orders automatically, saving the company millions of dollars per year.

- 💊 MYCIN (Stanford University, 1972–1980):

A medical Expert System that could diagnose bacterial infections and recommend treatments, with accuracy comparable to human specialists in evaluations.

- 🏭 DENDRAL (Stanford, begun in 1965):

Used in chemistry to identify molecular structures from spectral data, another early success story.

By the mid-1980s, many large corporations wanted their own AI systems.

Banks, airlines, hospitals, and even the military started investing in AI to solve domain-specific problems.

💼 The Boom: AI Becomes a Business

The success of Expert Systems created a wave of AI startups.

Major computer companies like IBM, Xerox, and DEC launched AI divisions.

Japan even launched a $400 million project called “Fifth Generation Computer Systems (FGCS)” in 1982,

aiming to create computers that could reason and process natural language.

In the U.S., DARPA invested heavily in similar research.

AI became a buzzword again — just like today’s “Generative AI” — and everyone wanted a piece of it.

🧩 The Problems Begin

But by the late 1980s, cracks began to appear.

Despite the hype, Expert Systems had serious limitations:

- ❌ Too Expensive: Building and maintaining them cost millions.

- ❌ Rigid Logic: They couldn’t adapt to new situations outside their rule set.

- ❌ Manual Knowledge Entry: Every rule had to be written by hand with the help of human experts, which was nearly impossible for complex fields.

- ❌ No Learning Ability: Unlike modern AI, Expert Systems couldn’t “learn” from data — they only followed what was programmed.

For example, if a new type of disease appeared, the system had no idea how to handle it unless a human manually updated its rules.

❄️ The Fall: The Second AI Winter (Late 1980s–1990s)

By 1987, the excitement around Expert Systems began to fade.

Companies realized these systems were hard to maintain, slow, and couldn’t scale.

When Japan’s FGCS project failed to meet its goals and U.S. investors saw no immediate profit, funding dried up again.

This led to what’s known as the Second AI Winter (roughly 1987–1993), which followed an earlier funding slump in the 1970s.

It was a time when AI was once again labeled “overhyped and overpromised.”

But this winter was different — because data, hardware, and algorithms were slowly improving behind the scenes.

🚀 The Legacy: What Expert Systems Gave Us

Even though the Expert Systems boom ended in disappointment, these systems laid the foundation for modern AI:

- The concept of knowledge representation evolved into data-driven AI.

- The structure of rule-based logic inspired early machine learning algorithms.

- The demand for faster processing pushed computer hardware innovation forward.

In short, Expert Systems showed us what AI could do — and what it couldn’t.

Their limitations directly inspired the next generation of AI researchers to find new approaches —

which led to the rise of Machine Learning and Deep Learning in the 2000s.

🚀 2010 and Beyond: The Era of Machine Learning

After 2010, the real revolution began.

With the rise of Machine Learning and Deep Learning, machines started learning from data — not just following instructions.

Tech giants like Google, Amazon, and Facebook integrated AI into their systems.

Now, machines could recognize images, understand speech, and even predict human behavior, all thanks to big data, powerful GPUs, and the rise of machine learning.

- Machine Learning (ML) allowed computers to learn from data instead of being explicitly programmed.

- Algorithms like Random Forests, Support Vector Machines, and especially Deep Neural Networks became game changers.

- In 2012, AlexNet, a deep learning model, won the ImageNet image-recognition challenge by a wide margin over earlier methods. This event is widely seen as the start of the Deep Learning era.

- Tech giants like Google, Apple, Amazon, and Microsoft started using AI for everything, from search and recommendations to voice assistants like Siri, Alexa, and Google Assistant.

- Natural Language Processing (NLP) models such as BERT, GPT, and T5 gave machines the ability to understand and generate human-like language.

Now, in the 2020s, AI has moved beyond automation — it’s creating art, writing code, diagnosing diseases, and even powering generative AI tools like ChatGPT and DALL·E.

The AI revolution is not coming — it’s already here. 💥

The real game-changer came in 2012, when AlexNet, created by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton at the University of Toronto, won the ImageNet competition by a huge margin.

It used deep convolutional neural networks (CNNs) — inspired by the human brain’s visual system — to recognize images better than any previous algorithm.

That victory marked the birth of Deep Learning dominance.

Soon after, similar architectures powered:

- Self-driving cars (by Tesla, Waymo)

- Facial recognition systems

- Voice assistants like Siri and Alexa

- Recommendation engines for YouTube, Netflix, and Amazon

💬 The Rise of Language Models

While Deep Learning changed vision, another revolution was brewing in Natural Language Processing (NLP).

Earlier models like Word2Vec (2013) and GloVe (2014) represented words as numeric vectors, so that words with similar meanings end up close together.

Then came the Transformer architecture (2017), introduced in the paper “Attention Is All You Need” by researchers at Google.

This architecture powered models like:

- BERT (2018) — for understanding language

- GPT series (2018–2023) — for generating language

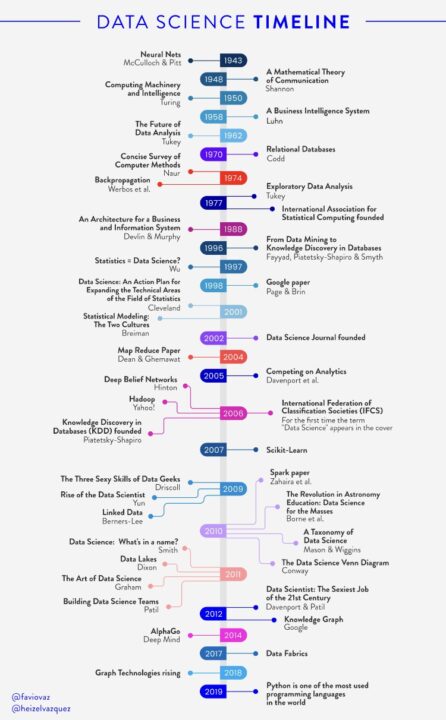

These models could write essays, answer questions, translate languages, and even hold human-like conversations. The accompanying image shows the evolution of AI from 1950 to 2020.

🧩 AI Everywhere

By the late 2010s, AI became embedded in daily life:

- Your phone’s camera uses AI for portrait effects

- Google Maps uses AI to predict traffic

- Banks use AI to detect fraud

- Healthcare uses AI to read X-rays and predict diseases

AI had officially moved from labs to life.

🔮 The 2020s: The Generative AI Era

In the 2020s, AI evolved from learning to creating.

Generative models like DALL·E, Stable Diffusion, and ChatGPT showed that AI could:

- Generate art and music 🎨

- Write stories, code, and even poetry ✍️

- Simulate conversations, summarize data, and automate workflows 🤖

We entered the Generative AI Revolution, where creativity meets computation. The accompanying image describes the evolution of AI, which is also the history of machine learning.

🤖 The Modern Age: Generative and Agentic AI

Today, we’ve entered the age of Generative AI and Agentic AI —

machines that can create, converse, and even think strategically.

From ChatGPT and Gemini to Claude, AI is helping humans write, design, code, and innovate like never before.

🧠 How Generative AI Works

Generative AI is powered by deep neural networks trained on billions of text and image examples.

They use architectures like:

- Transformers (the backbone of GPT, BERT, etc.)

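- Diffusion Models (used in tools like DALL·E 2 and Stable Diffusion)

Transformer-based language models predict what comes next, one word or token at a time, while diffusion models start from random noise and refine it over many steps. Either way, complex and realistic creations are built up step by step.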
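The "predict what comes next" idea can be sketched in a few lines of Python. This is a deliberately tiny stand-in, assuming a made-up corpus and a simple bigram counter instead of a neural network; the names `CORPUS`, `train_bigrams`, and `generate` are hypothetical. Real generative models replace the counting table with a Transformer trained on billions of examples, but the generation loop has the same shape.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up corpus; real models train on billions of examples.
CORPUS = "the cat sat on the mat and the cat ate the fish".split()


def train_bigrams(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, length, seed=0):
    """Build a sequence step by step, sampling each next word from the counts."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(length - 1):
        options = counts.get(words[-1])
        if not options:  # no known continuation, so stop early
            break
        choices, weights = zip(*options.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return words


model = train_bigrams(CORPUS)
print(model["the"].most_common(1))  # [('cat', 2)]: "cat" follows "the" most often
print(" ".join(generate(model, "the", 6)))
```

Each generated word depends only on the previous one here, which is why the output quickly stops making sense. Modern models look at thousands of previous tokens at once.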

⚡ The Next Leap: Agentic AI

Now, we’re entering the Agentic AI Era — where AI isn’t just a tool, it’s an autonomous assistant that can think, plan, and act.

Unlike traditional chatbots that wait for user input, Agentic AI can:

- Take initiative — set goals and execute tasks independently

- Use external tools like browsers, APIs, and databases

- Collaborate with other AIs for multi-step workflows

- Learn from feedback and improve over time

Think of it as AI moving from conversation → to action.

For example:

- A marketing agent that plans and launches an entire campaign

- A coding agent that builds and tests apps automatically

- A finance agent that analyzes market data and executes trades
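The move from conversation to action can be illustrated with a minimal agent loop in Python. Everything here is a hypothetical sketch: the two tools are plain functions standing in for real browsers, APIs, or databases, and the `plan` function is hard-coded, whereas a real agent would typically ask a language model to produce the steps.

```python
# Hypothetical tools: plain functions standing in for browsers, APIs, databases.
def search_prices(item):
    return {"laptop": 900, "phone": 500}.get(item, 0)


def apply_discount(price, percent):
    return price * (100 - percent) / 100


TOOLS = {"search_prices": search_prices, "apply_discount": apply_discount}


def plan(goal):
    """Stand-in planner. A real agent would ask a language model for these steps."""
    return [
        ("search_prices", lambda memory: (goal["item"],)),
        ("apply_discount", lambda memory: (memory["search_prices"], goal["discount"])),
    ]


def run_agent(goal):
    """Execute each planned step, feeding earlier results into later ones."""
    memory = {}
    for tool_name, make_args in plan(goal):
        result = TOOLS[tool_name](*make_args(memory))
        memory[tool_name] = result  # remember the result for later steps
    return memory


print(run_agent({"item": "laptop", "discount": 10}))
# {'search_prices': 900, 'apply_discount': 810.0}
```

The key point is the loop: the agent picks a tool, acts, stores the result, and uses it in the next step without waiting for a human prompt in between.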

🌍 AI as a Partner, Not Just a Tool

Generative and Agentic AI together mark the shift from Artificial Intelligence to Augmented Intelligence — where humans and machines collaborate.

AI is becoming a partner that helps us:

- Make better decisions

- Automate repetitive work

- Unlock creativity and innovation

This is the foundation for a new digital era, where intelligent systems enhance every aspect of life — from education and healthcare to finance, entertainment, and beyond.

🔮 The Road Ahead

The modern AI revolution is still unfolding.

The next challenges lie in:

- Ethics and responsibility — ensuring fairness, privacy, and transparency

- Energy efficiency — reducing the cost of massive AI models

- Integration — connecting Agentic AIs into real-world systems safely

But one thing is certain —

We’ve entered a time where AI doesn’t just understand the world… it helps shape it.

🌍 The Future of AI: Working with Humans, Not Against Them

AI is now present in every industry — healthcare, finance, sports, and education.

Its true purpose isn’t to replace humans, but to enhance human capabilities.

The future belongs to those who understand AI and learn to use it wisely.

🧠 Human Intelligence + Artificial Intelligence = Augmented Intelligence

AI is no longer just about automation — it’s about augmentation.

That means helping humans do things faster, smarter, and better, not replacing them.

Examples:

- Doctors using AI to detect diseases earlier and more accurately 🏥

- Teachers using AI tools to personalize learning for every student 🎓

- Businesses using AI to make data-driven decisions 📊

- Artists and writers using AI to enhance creativity 🎨✍️

AI becomes a co-pilot, not a competitor — extending human capabilities beyond our limits.

⚙️ Ethical AI: Building Trust in the System

As AI becomes more powerful, trust and ethics will decide its success.

We must ensure that AI is:

- Transparent — so people understand how it works

- Fair — so it doesn’t discriminate

- Accountable — so humans stay in control of critical decisions

- Private and secure — so our data stays safe

Responsible AI development will create systems that the world can truly rely on.

🤝 Humans at the Center

The future of AI is human-centered.

That means AI systems will adapt to human needs, emotions, and values — not the other way around.

Imagine a world where:

- AI assistants manage your daily tasks seamlessly

- AI agents help solve climate change through smarter energy use

- AI-guided robots assist the elderly or disabled with compassion and care

This is not science fiction anymore — it’s the direction we’re heading.

🔮 Looking Ahead

The journey of AI — from Alan Turing’s first question to today’s generative and agentic systems — shows one clear truth:

Every leap in AI has always started with human imagination.

As we step into the next decade, the goal is clear —

To build AI that amplifies humanity, not replaces it.

AI that helps us create, heal, and connect — a future where humans and machines grow together.

Understanding the History of Artificial Intelligence helps us see how far technology has come.

Leave a comment and share your view on the evolution of AI from 1950 to 2020 and the history of machine learning.

🧠 Want to see where this journey is heading next? Read our upcoming article: https://bharatnewsai.com/the-future-of-artificial-intelligence/

1. Who started Artificial Intelligence?

Alan Turing is considered a pioneer of AI. His 1950 question “Can machines think?” laid the foundation for artificial intelligence research, and John McCarthy coined the term “Artificial Intelligence” in 1955.

2. When did AI become popular?

AI gained real popularity after the 2000s with the rise of big data, machine learning, and advanced computing power.

3. What is the future of Artificial Intelligence?

The future of AI lies in generative and agentic systems that can think, learn, and make decisions independently — reshaping industries and human life.
